How Kimi K2.6 Took Over pi.dev: Why a Chinese Model Dethroned Claude

In late April 2026, the OpenRouter usage stats for pi — a minimalist open-source coding agent — changed sharply and without warning. Kimi K2.6 from China’s Moonshot AI jumped to first place by token volume, overtaking Claude Opus 4.7. Here’s what pi is, why the spike happened, and what it means.

What pi is — and how it differs from Claude Code

Pi is a minimalist open-source coding agent built by Mario Zechner (GitHub: badlogic) in February 2026. Its philosophy is captured in one line: “There are many coding agents, but this one is yours.” The entire system runs on four tools — read, write, edit, bash — and its system prompt is under 1,000 tokens.

By comparison, Claude Code’s system prompt weighs around 14,300 tokens and bundles plan mode, sub-agents, MCP, built-in web search, and multi-level permission sandboxing. Pi does none of that by default — only what you explicitly add through extensions. In exchange, it supports over 260 models through OpenRouter, the Anthropic API, OpenAI, Google, Ollama, and more.

By the end of April 2026, pi had processed 114 billion tokens in the past 30 days, ranking 9th globally by daily usage and 5th among coding agents. For a tool launched just two months earlier, that’s a significant footprint — driven almost entirely by its model-agnostic design.

April 20: the day the chart broke

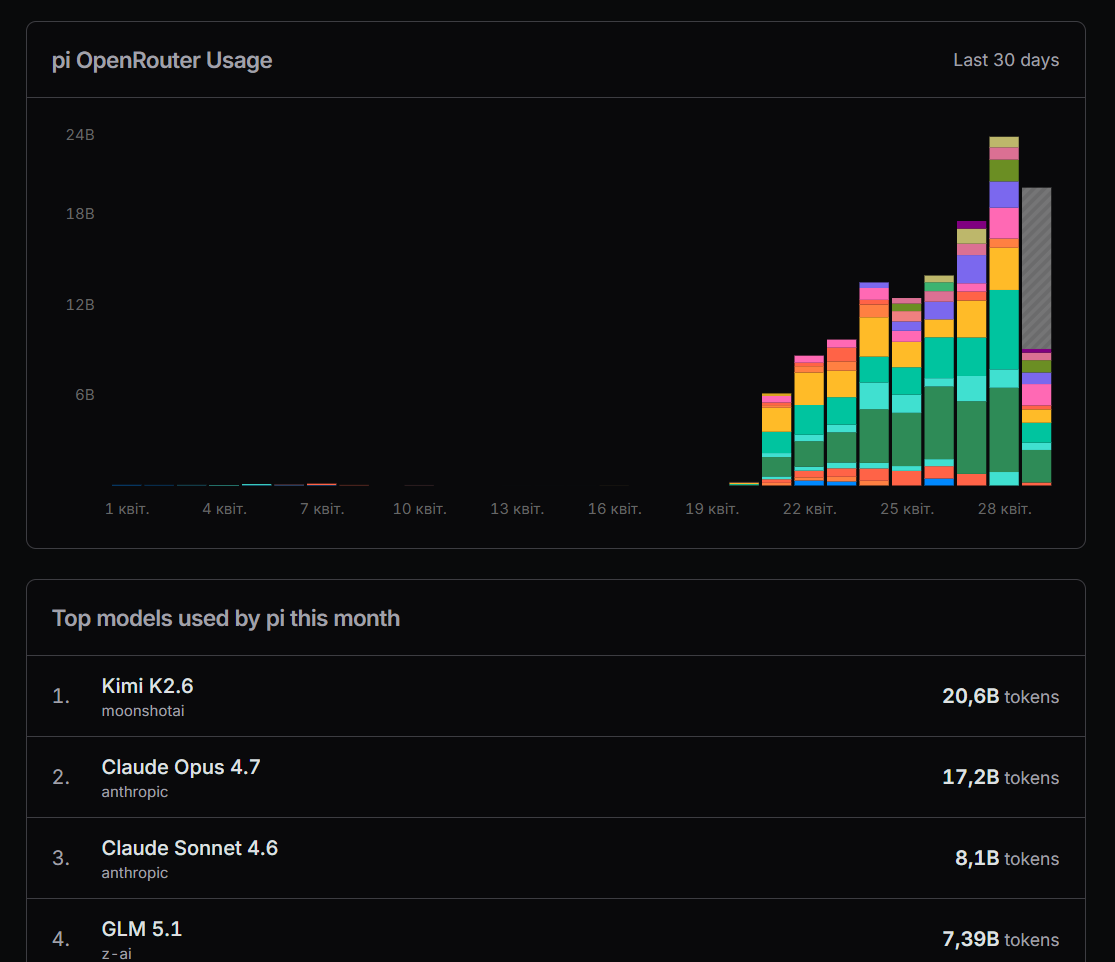

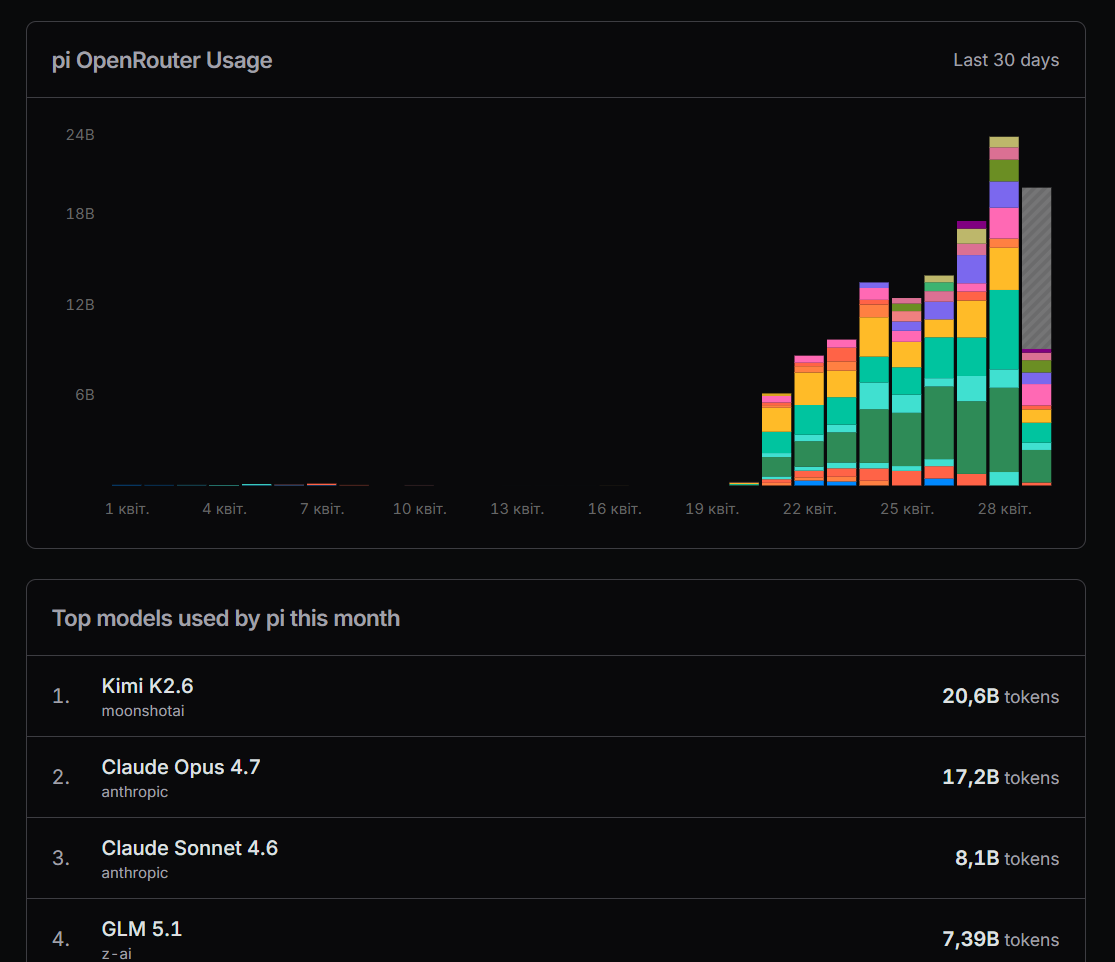

The pi usage chart on OpenRouter tells a single story. From the start of April through the 19th, token volume was minimal and flat. On April 20, 2026, the curve turned vertical: over eight days, daily volume went from near zero to 24 billion tokens.

April 20 is also the day Moonshot AI removed the “Preview” label from Kimi K2.6 and shipped it as a generally available model. The timing is not a coincidence — a powerful, affordable coding model launched, and pi turned out to be the fastest way to start using it.

Kimi K2.6: what the model is and why it matters

Kimi K2.6 is an open-weight multimodal agentic model from Moonshot AI. Architecture: 1 trillion total parameters, with 32 billion activated per token using Mixture of Experts (MoE). Context window: 262,144 tokens, with native support for text, images, and video.

The model is built for autonomous operation: it supports 12-hour unattended coding sessions, coordinates up to 300 parallel sub-agents, and handles up to 4,000 coordinated steps within a single task. On SWE-Bench Pro — one of the main practical coding benchmarks — Kimi K2.6 scores 58.6. On HLE-Full (Humanity’s Last Exam with tools), it reaches 54.0, ahead of GPT-5.4 (52.1) and Claude Opus 4.6 (53.0).

| Metric | Value |
|---|---|
| Total parameters | 1T (32B active per token) |
| Context window | 262,144 tokens |
| SWE-Bench Pro | 58.6 |
| HLE-Full (with tools) | 54.0 (#1, ahead of GPT-5.4 and Claude Opus 4.6) |
| Release date | April 20, 2026 |

Price: the real reason for mass adoption

Performance matters, but the factor that drove adoption at this speed is cost. Kimi K2.6 through the API is priced at $0.60 per million input tokens and $2.50 per million output tokens. Claude Sonnet 4.6 costs $3.00 / $15.00. That makes Kimi K2.6 5× cheaper on input and 6× cheaper on output.

| Model | Input ($/1M tokens) | Output ($/1M tokens) |
|---|---|---|
| Kimi K2.6 | $0.60 | $2.50 |
| Claude Sonnet 4.6 | $3.00 | $15.00 |
| Claude Opus 4.7 | $15.00 | $75.00 |
| GPT-5.4 | $10.00 | $30.00 |

In autonomous coding mode, pi burns through tokens quickly on large tasks. At that scale, a 5× price difference isn’t a rounding error: it’s the difference between a session that costs $3 and one that costs $15. That’s why Kimi K2.6 leads by total token volume, not just by number of requests.
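To make the session-cost arithmetic concrete, here is a minimal Python sketch that prices one session at the per-million-token rates from the table above. The token counts (4M input, 240K output) are a hypothetical workload chosen for illustration, not measured pi data, and `session_cost` is a helper name introduced here.

```python
# Prices in USD per 1M tokens as (input, output), copied from the price table.
PRICES = {
    "Kimi K2.6": (0.60, 2.50),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Opus 4.7": (15.00, 75.00),
    "GPT-5.4": (10.00, 30.00),
}


def session_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one session for the given token counts."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload (an assumption for illustration only).
INPUT_TOKENS = 4_000_000
OUTPUT_TOKENS = 240_000

for model in PRICES:
    cost = session_cost(model, INPUT_TOKENS, OUTPUT_TOKENS)
    print(f"{model:<18} ${cost:8.2f}")
```

Under these assumed token counts, the Kimi K2.6 session comes to about $3.00 and the Claude Sonnet 4.6 session to about $15.60. The blended ratio always lands between the input ratio and the output ratio, so the exact multiple depends on how input-heavy the session is.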

Top 10 models on pi — April 2026

| # | Model | Provider | Tokens |
|---|---|---|---|
| 1 | Kimi K2.6 | Moonshot AI | 20.6B |
| 2 | Claude Opus 4.7 | Anthropic | 17.2B |
| 3 | Claude Sonnet 4.6 | Anthropic | 8.1B |
| 4 | GLM 5.1 | Z-AI | 7.39B |
| 5 | DeepSeek V4 Flash | DeepSeek | 6.53B |
| 6 | MiniMax M2.7 | MiniMax | 5.45B |
| 7 | GPT-5.4 | OpenAI | 3.6B |
| 8 | DeepSeek V4 Pro | DeepSeek | 3.53B |
| 9 | Claude Opus 4.6 | Anthropic | 3.44B |
| 10 | Qwen3.6 Plus | Qwen | 3.4B |

Why pi became the natural home for Kimi

Claude Code is a closed system with hardcoded permissions, a sandbox, and command pre-screening through a secondary Haiku model. Switching the underlying model is not an option — it runs on Anthropic models only. Pi is the structural opposite: any model, any provider, no built-in constraints.

When a new capable and affordable model appeared, developers already using pi could switch to it by changing one line of config. Claude Code offers no such path. This structural flexibility turns pi into the default testing ground for every new model that hits the market — which is exactly what happened here.
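As a rough illustration of why the switch is so cheap in a model-agnostic setup, the sketch below builds two OpenRouter-style chat-completion requests that differ only in the model string. This is not pi's actual configuration format. The `build_request` helper and both model slugs are hypothetical placeholders, so check OpenRouter's model list for the real identifiers. Nothing is sent over the network.

```python
import json

# OpenRouter exposes an OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Hypothetical model slugs, used only for illustration.
KIMI = "moonshotai/kimi-k2.6"
OPUS = "anthropic/claude-opus-4.7"


def build_request(model: str, prompt: str) -> dict:
    """Build (but do not send) a chat-completion request for the given model."""
    return {
        "url": OPENROUTER_URL,
        "body": {
            "model": model,  # the only field that changes between providers
            "messages": [{"role": "user", "content": prompt}],
        },
    }


prompt = "Refactor utils.py to remove the duplicated parsing code."
before = build_request(OPUS, prompt)
after = build_request(KIMI, prompt)

# Collect the body fields whose values differ between the two requests.
changed = [key for key in before["body"] if before["body"][key] != after["body"][key]]
print(json.dumps(changed))
```

In this sketch the only field that differs between the two requests is `model`, which is the sense in which switching comes down to a one-line change.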

A model that costs 5× less and outperforms competitors on key benchmarks doesn’t just “gain popularity”; it changes the economics of daily use.

What this means for the AI agent market

Pi’s April 2026 numbers illustrate several things happening simultaneously. First, open-weight models are no longer a tier below closed ones on practical coding tasks — Kimi K2.6 is a legitimate benchmark competitor at a fraction of the price. Second, model-agnostic agents have a structural edge: every new model release is an upgrade for pi users, while Claude Code users wait for Anthropic.

Third — and perhaps most tellingly — Chinese AI companies hold 6 out of 10 spots in pi’s top model list: Moonshot AI, DeepSeek (twice), MiniMax, Z-AI, and Qwen. That’s no longer an experiment. It’s the new normal.
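The slot count can be cross-checked against token volume with a short Python sketch over the top-10 table. The figures are copied from that table, and the set of Chinese providers follows the list in the paragraph above. The variable names are introduced here for illustration.

```python
# (model, provider, tokens in billions), copied from the top-10 table.
TOP10 = [
    ("Kimi K2.6", "Moonshot AI", 20.6),
    ("Claude Opus 4.7", "Anthropic", 17.2),
    ("Claude Sonnet 4.6", "Anthropic", 8.1),
    ("GLM 5.1", "Z-AI", 7.39),
    ("DeepSeek V4 Flash", "DeepSeek", 6.53),
    ("MiniMax M2.7", "MiniMax", 5.45),
    ("GPT-5.4", "OpenAI", 3.6),
    ("DeepSeek V4 Pro", "DeepSeek", 3.53),
    ("Claude Opus 4.6", "Anthropic", 3.44),
    ("Qwen3.6 Plus", "Qwen", 3.4),
]

# Providers the article identifies as Chinese AI companies.
CHINESE_PROVIDERS = {"Moonshot AI", "Z-AI", "DeepSeek", "MiniMax", "Qwen"}

chinese_rows = [row for row in TOP10 if row[1] in CHINESE_PROVIDERS]
chinese_slots = len(chinese_rows)
chinese_tokens = sum(tokens for _, _, tokens in chinese_rows)
total_tokens = sum(tokens for _, _, tokens in TOP10)
share = chinese_tokens / total_tokens

print(f"Slots: {chinese_slots} of {len(TOP10)}")
print(f"Tokens: {chinese_tokens:.2f}B of {total_tokens:.2f}B ({share:.1%})")
```

By this tally, the six Chinese models account for roughly 59% of the tokens in the top 10, which is about the same as their share of slots.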

Usage data sourced from public OpenRouter statistics for the pi app, April 2026. Prices and benchmarks current at time of publication.
